Quantum Computing Demystified: What Software Engineers Actually Need to Know

A software engineer’s perspective on whether the quantum revolution is real—or just another hype cycle

I typically write about AI and system design—distributed systems, scalability patterns, the architectural decisions that keep production systems running. So why am I writing about quantum computing?

Because in 2025, quantum computing crossed a threshold that makes it impossible to ignore. Not because it’s ready to replace your cloud infrastructure (spoiler: it’s not), but because understanding what’s real versus what’s hype is becoming essential knowledge — especially if you build systems handling sensitive data.

This post is my attempt to cut through the noise, based on what I've learned from studying recent advances in the field.

Disclaimer: My research on this topic has been moderate at best. I don't have a PhD in this, and you probably don't need to go much deeper than this post unless your work truly requires it.

First, Let’s Kill the Buzzwords

You’ve probably heard quantum computing described as “computers that can be 0 and 1 at the same time” or “exponentially faster than regular computers.” These explanations are... not wrong, but they’re like describing a car as “a box with wheels that moves.”

Let me try a different approach.

The Casino Analogy

Imagine you’re at a casino, and instead of a coin that lands heads or tails, you have a magical spinning coin that stays spinning until you look at it. While it’s spinning, it has some probability of landing heads and some probability of landing tails—but it hasn’t “decided” yet.

That spinning coin is a qubit. The moment you look at it (measure it), it “collapses” into either heads or tails. Until then, it exists in a blend of both possibilities.

Now here's where it gets interesting: what if you had 50 of these magical coins, linked in a weird way so that their outcomes are correlated, and seeing how one lands tells you something about how the others will land? That connection is entanglement.

A classical computer with 50 bits can represent exactly one combination of heads and tails at a time. A quantum computer with 50 qubits can work with all possible combinations simultaneously — that’s 2^50 or about 1 quadrillion possibilities at once.
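To get a feel for how big 2^50 is, here is a rough back-of-the-envelope sketch of the memory a classical machine would need just to store the full state of n qubits. It assumes one 16-byte complex number per combination, which is how a simple simulator would typically hold it; the function name is mine, not from any library.

```python
# Rough arithmetic: classical memory needed to store every amplitude
# of an n-qubit state. Assumption: one complex128 (16 bytes) per basis state.
BYTES_PER_AMPLITUDE = 16  # two 64-bit floats: real and imaginary parts


def state_vector_bytes(num_qubits: int) -> int:
    """Bytes needed to hold all 2**num_qubits amplitudes."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


for n in (10, 30, 50):
    amplitudes = 2 ** n
    gib = state_vector_bytes(n) / 2 ** 30
    print(f"{n} qubits: {amplitudes:,} amplitudes, about {gib:,.2f} GiB")
```

At 30 qubits this fits on a beefy workstation. At 50 qubits it is in the petabyte range, which is why brute-force simulation stops being practical somewhere around there.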

Why This Doesn’t Mean “Try Everything at Once”

Here’s the catch that most explanations skip: you can’t just read out all those possibilities. When you measure the qubits, you only get one answer.

The trick of quantum computing is using interference—like noise-canceling headphones for wrong answers. A quantum algorithm is carefully designed so that wrong answers cancel each other out while correct answers reinforce each other. When you finally measure, you’re much more likely to get the right answer.
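Interference is easier to see with numbers. The sketch below is a tiny, hand-rolled one-qubit simulation (no quantum library involved, and the helper names are mine). It applies the Hadamard gate, a standard single-qubit gate, twice: the first application creates a 50/50 blend, and the second makes the two paths to "1" cancel out while the paths to "0" reinforce.

```python
import math

# A one-qubit state is a pair of amplitudes [amp_for_0, amp_for_1].
# The Hadamard gate turns a definite 0 into an equal blend of 0 and 1.
S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]


def apply(gate, state):
    """Multiply a 2x2 gate matrix by a 2-element state vector."""
    return [sum(gate[r][c] * state[c] for c in range(2)) for r in range(2)]


def probabilities(state):
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return [abs(a) ** 2 for a in state]


zero = [1.0, 0.0]
superposed = apply(H, zero)        # equal chance of measuring 0 or 1
interfered = apply(H, superposed)  # the two contributions to "1" cancel

print("after one H: ", probabilities(superposed))
print("after two H: ", probabilities(interfered))
```

The minus sign in the gate is what does the work: amplitudes can be negative, so they can cancel, which ordinary probabilities never do. Real algorithms arrange this cancellation across many qubits at once.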

This is incredibly hard to engineer. One tiny disturbance and your carefully orchestrated interference pattern falls apart.

So Where Are We Actually? (The Honest Version)

Let’s talk reality. I’ll give you the good, the bad, and the overhyped.

The Good: Real Progress is Happening

Error correction is finally working. Google’s Willow chip (late 2024) demonstrated something crucial: as they added more qubits to protect information, errors actually went down. This sounds obvious, but it’s the first time we’ve seen the theory work in practice at meaningful scale.

Multiple technologies are advancing. We’re not betting on one horse. Trapped ions, superconducting circuits, neutral atoms, photonics—each has different strengths. Competition is driving innovation.

First practical advantages are emerging. In March 2025, IonQ ran a medical device simulation that beat classical supercomputers by 12%. Small, but real.

The Bad: We’re Still in the “Wright Brothers” Phase

Qubits are incredibly fragile. Superconducting qubits, for example, operate a fraction of a degree above absolute zero (about -273°C), which is colder than outer space. A stray vibration, electromagnetic interference, or even a cosmic ray can destroy a calculation. Current error rates are roughly 1 in 1,000 operations. Classical computers? About 1 in 1,000,000,000,000,000,000.
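To see why a 1-in-1,000 error rate is such a problem, here is a small sketch of how errors compound. It makes a simplifying assumption that each operation fails independently with the same probability, which real hardware does not strictly obey, so treat it as intuition rather than a hardware model.

```python
def success_probability(error_rate: float, num_operations: int) -> float:
    """Chance that every operation succeeds, assuming independent errors."""
    return (1.0 - error_rate) ** num_operations


# Roughly the per-operation error rate quoted above.
QUANTUM_ERROR_RATE = 1e-3

for ops in (100, 1_000, 10_000, 1_000_000):
    p = success_probability(QUANTUM_ERROR_RATE, ops)
    print(f"{ops:>9,} operations: {p:.6f} chance of an error-free run")
```

After about a thousand operations you are already more likely than not to have hit an error, and useful algorithms need millions. That gap is what error correction has to close.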

The overhead is brutal. To get one reliable qubit (called a “logical qubit”), you need somewhere between 1,000 and 10,000 physical qubits running error correction. IBM’s target: 200 reliable qubits by 2029—requiring 100,000+ physical qubits.

Current machines are essentially lab experiments. The largest systems have a few thousand qubits. Useful applications need millions.

The One Thing That Should Actually Worry You: Cryptography

Here’s where quantum computing becomes genuinely relevant for working engineers. Not someday—now.

The 10,000-Foot View

Most internet encryption (RSA, used in HTTPS, VPNs, banking) relies on a simple fact: multiplying two huge prime numbers is easy; figuring out which primes were multiplied is essentially impossible for regular computers.
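Here is a toy illustration of that asymmetry. The primes are tiny by cryptographic standards (two well-known Mersenne primes picked for convenience), and trial division is the most naive factoring method there is, so this only shows the shape of the problem, not real attack costs.

```python
def factor_by_trial_division(n: int) -> tuple[int, int]:
    """Brute-force search for the smallest odd factor of n."""
    candidate = 3
    while candidate * candidate <= n:
        if n % candidate == 0:
            return candidate, n // candidate
        candidate += 2
    raise ValueError("no odd factor found; n is not a product of two odd primes")


# Toy primes for illustration only. Real RSA primes have hundreds of digits.
p = 2 ** 19 - 1   # 524287
q = 2 ** 31 - 1   # 2147483647

n = p * q                               # the easy direction: one multiplication
recovered = factor_by_trial_division(n) # the hard direction: ~260,000 divisions

print("n =", n)
print("factors =", recovered)
```

The multiplication is a single step, while the search already takes hundreds of thousands of steps for a 15-digit number. The naive search cost grows roughly with the square root of n, so each extra digit makes it worse, and even the best known classical algorithms remain impractical at RSA sizes.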

In 1994, mathematician Peter Shor proved that a quantum computer could solve this “impossible” problem efficiently. A powerful enough quantum computer could break RSA encryption in hours instead of billions of years.
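For the curious, the core idea is that factoring can be reduced to finding the period of a repeating sequence, and period finding is the part a quantum computer speeds up. The sketch below runs that reduction on a toy number, with the period found by classical brute force standing in for the quantum step. The function names are mine, and this is an illustration of the reduction, not an implementation of the quantum algorithm.

```python
from math import gcd
from typing import Optional


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1 (requires gcd(a, n) == 1).

    Brute force here. This is the step a quantum computer would accelerate.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_via_period(a: int, n: int) -> Optional[tuple[int, int]]:
    """Try to split n using the period of a modulo n. Returns None on a bad guess."""
    shared = gcd(a, n)
    if shared != 1:
        return tuple(sorted((shared, n // shared)))  # lucky guess, no period needed
    r = find_period(a, n)
    if r % 2 == 1:
        return None                # odd period: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                # trivial square root: try a different a
    p = gcd(half - 1, n)
    return tuple(sorted((p, n // p)))


print(find_period(7, 15))        # period of 7, 4, 13, 1, ...
print(factor_via_period(7, 15))
```

With a = 7 and n = 15 the sequence repeats every 4 steps, and that period hands you the factors 3 and 5 via two gcd computations. Everything except `find_period` is cheap on a laptop, even for huge numbers.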

Before You Panic

We don’t have computers powerful enough yet. Breaking RSA-2048 (standard encryption) would require roughly 4,000-20,000 logical qubits running millions of operations perfectly. With error correction overhead, that’s 2-20 million physical qubits of much higher quality than exist today.
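As a sanity check on those numbers, here is the arithmetic in a few lines. The only inputs are the figures quoted in this post; the helper names are mine. Dividing the physical-qubit estimates by the logical-qubit estimates gives the error-correction overhead they imply.

```python
def physical_qubits(logical_qubits: int, overhead_per_logical: int) -> int:
    """Total physical qubits for a given error-correction overhead."""
    return logical_qubits * overhead_per_logical


def implied_overhead(physical: int, logical: int) -> float:
    """Physical qubits per logical qubit implied by a pair of estimates."""
    return physical / logical


# Figures quoted in the text: 4,000-20,000 logical, 2-20 million physical.
low = implied_overhead(2_000_000, 4_000)
high = implied_overhead(20_000_000, 20_000)
print(f"implied overhead: {low:,.0f} to {high:,.0f} physical per logical qubit")

# The same logical range at an assumed overhead of 1,000 per logical qubit.
print(f"{physical_qubits(4_000, 1_000):,} to {physical_qubits(20_000, 1_000):,}")
```

So the 2-20 million figure corresponds to roughly 500 to 1,000 physical qubits per logical qubit, the optimistic end of the overhead range. At 10,000 per logical qubit the totals would be ten times larger, which is part of why the estimates carry so much uncertainty.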

Current best estimate for “cryptographically relevant” quantum computers: mid-2030s. And there’s significant uncertainty—could be later, could (less likely) be sooner.

Why You Should Still Care Today

Here’s the threat model that matters: “Harvest Now, Decrypt Later.”

Nation-state adversaries are almost certainly capturing and storing encrypted communications today, waiting for quantum computers to mature. If your data needs to stay secret for 10+ years (government, healthcare, financial, intellectual property), it’s already at risk.

What To Actually Do

The good news: Post-quantum cryptography (PQC) exists. NIST finalized its first PQC standards in 2024, based on mathematical problems that quantum computers are not known to solve efficiently. These algorithms run on regular computers.

Practical steps:

Know your cryptographic inventory. Where does your system use RSA or elliptic curve cryptography?

Prioritize by data lifespan. What data would still be valuable if decrypted in 2035?

Start planning migration. The new standards are ready: ML-KEM (FIPS 203, derived from CRYSTALS-Kyber) and ML-DSA (FIPS 204, derived from CRYSTALS-Dilithium). Libraries are maturing.

For high-security applications: Consider hybrid encryption—using both classical and post-quantum algorithms during transition.
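To make the hybrid idea concrete: one common approach is to run a classical key exchange and a post-quantum one side by side, then feed both shared secrets into a key derivation function, so an attacker has to break both to recover the session key. The sketch below shows only that combining step, using HKDF (RFC 5869) built from Python's standard library. The two secrets are random placeholders standing in for real key-exchange outputs, and the `info` label and function names are made up for this example.

```python
import hashlib
import hmac
import os


def hkdf_sha256(input_key_material: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256 and the default all-zero salt."""
    salt = b"\x00" * hashlib.sha256().digest_size
    prk = hmac.new(salt, input_key_material, hashlib.sha256).digest()  # extract
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                           # expand
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_secret: bytes, pq_secret: bytes) -> bytes:
    """Derive one session key from both secrets.

    Assumption: both secrets are fixed-length (32 bytes here), so plain
    concatenation is unambiguous.
    """
    return hkdf_sha256(classical_secret + pq_secret, info=b"hybrid-demo-v1")


# Placeholders: in a real system these come from, e.g., an elliptic-curve
# exchange and a post-quantum KEM. Here they are just random bytes.
classical_secret = os.urandom(32)
pq_secret = os.urandom(32)

session_key = hybrid_session_key(classical_secret, pq_secret)
print(session_key.hex())
```

The combining step itself is a couple of hash calls, so it adds very little cost. Whatever extra overhead hybrid mode carries comes from running the second key exchange and sending its larger messages.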

For most consumer apps, you have time. For government, healthcare, or finance? This should be on your roadmap now.
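One way to turn "prioritize by data lifespan" into something you can run against an inventory is the rule of thumb often credited to Michele Mosca: if the years your data must stay secret plus the years your migration will take exceed the years until a capable quantum computer exists, you are already late. The sketch below applies that rule to a made-up inventory. The system names, year counts, and the 10-year horizon are all illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass

# Public-key algorithms that Shor's algorithm would break.
QUANTUM_VULNERABLE = {"RSA", "ECDH", "ECDSA"}


@dataclass
class CryptoUse:
    system: str           # hypothetical system name
    algorithm: str        # what protects the data or key exchange
    secrecy_years: int    # how long the data must stay confidential
    migration_years: int  # how long a migration would realistically take


def at_risk(use: CryptoUse, years_until_quantum: int) -> bool:
    """Mosca-style check: secrecy + migration time exceeds the time we have."""
    if use.algorithm not in QUANTUM_VULNERABLE:
        return False
    return use.secrecy_years + use.migration_years > years_until_quantum


# Entirely hypothetical inventory for illustration.
inventory = [
    CryptoUse("patient-records-api", "RSA", secrecy_years=25, migration_years=3),
    CryptoUse("marketing-site-tls", "ECDH", secrecy_years=1, migration_years=1),
    CryptoUse("backup-archive", "AES-256", secrecy_years=15, migration_years=2),
]

YEARS_UNTIL_QUANTUM = 10  # assumption, loosely matching a mid-2030s estimate

for use in inventory:
    status = "PRIORITIZE" if at_risk(use, YEARS_UNTIL_QUANTUM) else "ok for now"
    print(f"{use.system:<22} {use.algorithm:<8} {status}")
```

The point of a check like this is less the exact threshold and more forcing the conversation about which data actually has a long shelf life.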

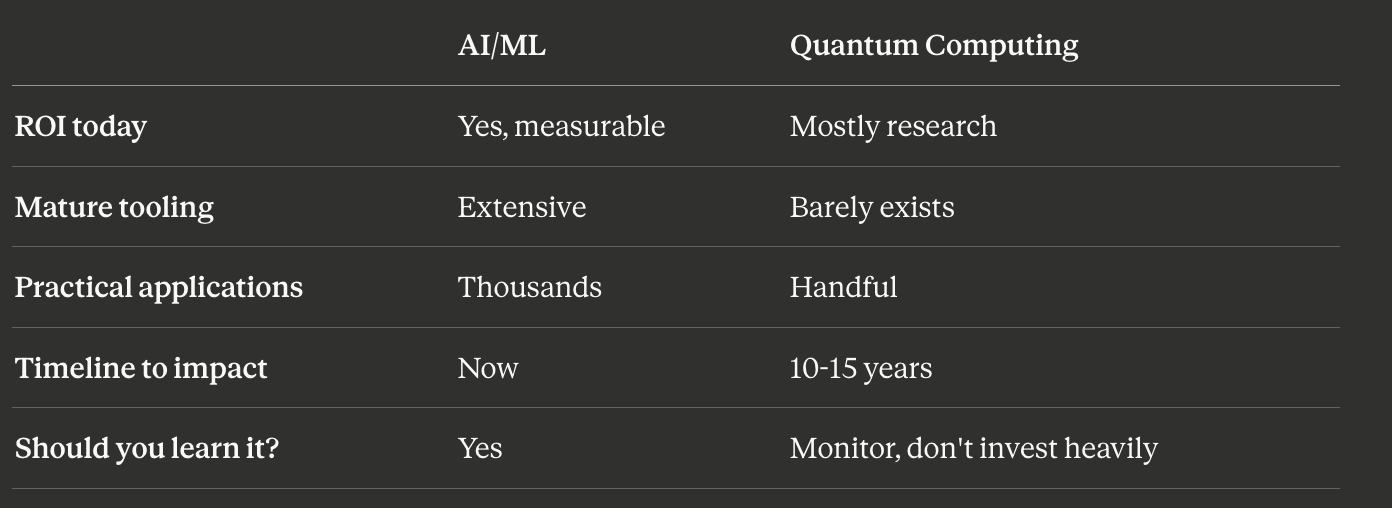

Quantum vs. AI: Where Should You Focus?

Since my usual beat is AI and system design, here’s my honest comparison:

My recommendation: Unless you’re working in cryptography, drug discovery research, or certain niche optimization problems, keep quantum on your “interesting to watch” list, not your “invest significant time” list.

The exception: post-quantum cryptography migration. That’s real, actionable, and relevant now.

The Bottom Line

Is quantum computing real? Yes. The physics works, algorithms exist, and progress is happening.

Is it overhyped? Also yes. Timelines are consistently too optimistic, and “breakthrough” announcements often don’t survive scrutiny.

What should engineers do?

Don’t ignore it—the cryptography implications alone make it worth understanding

Don’t invest heavily yet—the technology isn’t ready for most applications

Do start planning your post-quantum cryptography migration

Stay skeptical of headlines

The quantum future is coming. It’s just a marathon, not a sprint.

Want to Go Deeper?

If this whetted your appetite and you want to understand the technical details, here are some excellent resources:

Video explainer: For a visual deep-dive into how quantum computers actually work, this YouTube explainer does an excellent job breaking down the concepts.

Podcasts for staying current:

Quantum Tech Updates — Great for tracking industry developments

The New Quantum Era — Innovation in quantum computing science and technology

If you found this useful, subscribe for more deep dives on AI, system design, and the technologies that actually matter for building production systems. Questions or corrections? Drop them in the comments.

The "harvest now, decrypt later" framing is what finally made this click for me. Nobody cares about breaking today's SSH keys in 2035, but government cables or biotech IP? Yeah, that's getting stored.

One q: you mention hybrid encryption during transition. Isn't that gonna tank performance? Like, doubling the overhead for key exchange seems rough for latency-sensitive apps. Wondering if the practical move is just rip-the-bandaid and go full PQC once CRYSTALS libraries stabilize.